
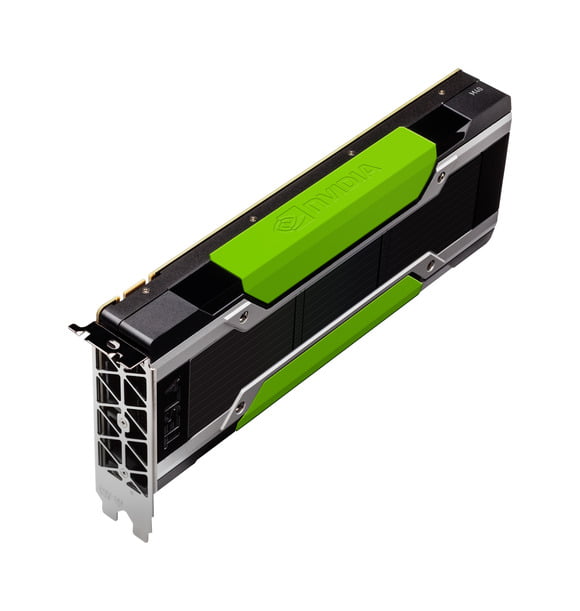

Nvidia’s Tesla M40 and its little brother, the M4, both announced Tuesday, are server-based graphics processors designed to process and provide more context for videos, said Ian Buck, vice president of accelerated computing at Nvidia.

The GPUs will sit in servers where videos are stored and then served to computing devices over the Internet. Software models, analytics and algorithms assist the machine-learning GPUs in classifying, tagging and resizing images.

Known for graphics processors, Nvidia already has a reputation for improving the gaming experience on PCs and mobile devices. The company’s graphics products also help drive research on the world’s fastest supercomputers. The Tesla M40 and M4 are Nvidia’s first GPUs designed specifically for hyperscale servers, which are primarily used for Web hosting and serving.

Nvidia has also been involved in developing “deep learning” models, most notably for vehicles. The company has said its Tegra X1 mobile chip could help build self-driving cars, which could recognize objects, signs, images, lanes and other things.

The importance of adding context to videos and images is also growing, Buck said. Using machine learning to attach information to videos could improve the accuracy of search results. Machine-learning processors could also power software models designed to analyze videos and images, Buck said.

Billions of videos are being served every day through sites like YouTube and livecast services like Periscope. The videos will look better and shake less with help from the new GPUs, Buck said.

A batch of eight M40 GPUs can be used on high-performance servers where videos and images could pass through algorithms and analytics tools. Video could then be served through hyperscale servers with Tesla M4 GPUs, where post-processing could help enhance color, resize and stabilize videos.

The Tesla M40 has 3,072 cores, offers a peak performance of 7 teraflops, has 12GB of GDDR5 memory and draws 250 watts of power.

The smaller M4 is designed for servers based on Open Compute Project specifications, which are mainly designed for hyperscale environments. The M4 delivers 2.2 teraflops of performance, draws just 50 to 75 watts of power and has 4GB of GDDR5 memory.
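For a rough sense of how the two cards compare, the quoted figures can be turned into peak performance per watt. The short Python sketch below uses only the numbers given above; the helper name is illustrative, and it assumes the M4's 2.2-teraflop figure holds across its whole 50-to-75-watt range, which may not be the case.

```python
def gflops_per_watt(peak_teraflops: float, watts: float) -> float:
    """Peak throughput per watt, in gigaflops per watt."""
    return peak_teraflops * 1000.0 / watts


# Figures as quoted in the article (peak teraflops, watts).
m40 = gflops_per_watt(7.0, 250.0)

# Assumption: the same 2.2-teraflop peak at both ends of the M4's power range.
m4_at_75w = gflops_per_watt(2.2, 75.0)
m4_at_50w = gflops_per_watt(2.2, 50.0)

# Combined peak of the eight-M40 batch mentioned earlier.
batch_peak_teraflops = 8 * 7.0

print(f"Tesla M40: {m40:.1f} gigaflops per watt")
print(f"Tesla M4:  {m4_at_75w:.1f} to {m4_at_50w:.1f} gigaflops per watt")
print(f"Eight M40s: {batch_peak_teraflops:.0f} teraflops peak")
```

On these numbers the M4 is at least as efficient per watt as the M40, while a batch of eight M40s offers far more raw throughput. Peak figures say little about sustained performance on real video workloads.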

The GPUs will be loaded by server makers into products, and won’t be available directly through stores.

[Source: PCWorld]